On measuring product impact

Mixpanel’s performance self-evaluations ask employees two questions:

- What did you accomplish?

- What was the impact of each accomplishment?

For a product manager, the first question is simple: the list of products and features your team released will do the trick.

But the impact… the answer to that question has consequences. Demonstrating positive impact validates your team’s approach, and suggests that further investment in that area is warranted. Conversely, identifying negative impact means it’s time to go back to the drawing board, and avoid fruitlessly investing further in that direction. Understanding impact can drastically change the priorities of any product team.

And while assessing impact is extremely important, it is also extremely difficult to do well.

The pitfalls of existing impact analyses

Until now, product teams have had two options for assessing the effect of their product launches on key metrics. Unfortunately, both methods are flawed.

One method is to see how key metrics change after the launch. If a metric improves, you might be tempted to attribute that change to the launch. But there’s no way to be certain that the product itself caused the change. Maybe marketing released a new campaign that drove engagement. Or perhaps it’s the start of your company’s busy season. There are too many confounding factors to trust the results of this method.

The other method is to A/B test the feature. A/B testing gives you a scientifically reliable result, but it has its own disadvantages. A/B tests are time-consuming to prepare, deploy, and evaluate, which slows down development. Even more problematically, a portion of your customers (half, in an even split) have a valuable feature withheld from them until the results of the test come in. In short, A/B tests hamper your ability to deliver value to customers as quickly as possible.

So, how can you get a reliable assessment of impact without slowing the development process?

The power of propensity matching

The answer is a causal inference technique called propensity matching. Propensity matching offers statistically sound, reliable assessments of the effect of product launches without the need for A/B testing. Without teaching an entire statistics course, here’s how it works:

When assessing a new feature with propensity matching, the first step is to split users into two groups: “adopters,” the users who have tried the new feature, and “non-adopters,” the users who haven’t. The most basic analysis would compare how adopters and non-adopters perform on key metrics, but that comparison is flawed due to a phenomenon known as self-selection bias. In product analytics, self-selection bias appears as the tendency for your most active users not only to perform your key events frequently, but also to try out new features more often. In other words, without any correction for self-selection, the adopter group will tend to be filled with your most active users. Unsurprisingly, those adopters would post better numbers than the non-adopters even if the feature had no effect at all, which makes the raw comparison misleading.
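To see self-selection bias in action, here is a minimal Python sketch on synthetic data. Everything in it is an assumption made for illustration: the `simulate_users` and `naive_difference` helpers, the single "activity" score, and the true effect of 1.0 are hypothetical, not Mixpanel data or code.

```python
import math
import random
from statistics import mean

def simulate_users(n=20000, true_effect=1.0, seed=0):
    """Build synthetic (activity, adopted, metric) rows.

    Assumption: more active users are more likely to adopt the feature,
    and activity also drives the key metric on its own.
    """
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        activity = rng.gauss(0.0, 1.0)
        p_adopt = 1.0 / (1.0 + math.exp(-1.5 * activity))
        adopted = rng.random() < p_adopt
        # The feature itself adds exactly `true_effect` to the metric.
        metric = 5.0 + 2.0 * activity + true_effect * adopted + rng.gauss(0.0, 1.0)
        rows.append((activity, adopted, metric))
    return rows

def naive_difference(rows):
    """Raw gap between adopters and non-adopters, with no correction."""
    adopters = [metric for _, adopted, metric in rows if adopted]
    non_adopters = [metric for _, adopted, metric in rows if not adopted]
    return mean(adopters) - mean(non_adopters)

rows = simulate_users()
naive = naive_difference(rows)
print(f"true effect: 1.00, naive adopter vs non-adopter gap: {naive:.2f}")
```

Because adopters in this simulation skew toward high-activity users, the raw gap should come out well above the true effect of 1.0. The excess is the self-selection bias, not anything the feature did.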

That’s where propensity matching comes in. Propensity matching uses a machine learning model to estimate each user’s likelihood, or propensity, to use the new feature, and buckets users with similar propensities into groups. Because the users within a group were about equally likely to adopt, comparing them is much closer to a like-for-like comparison. Within each propensity group, the analysis compares the behavior of adopters and non-adopters to calculate the delta between them. Finally, it takes the average of the deltas from each propensity group, weighted by group size, to determine the overall impact of the feature. A 95% confidence interval serves as the cherry on top: if the interval excludes zero, the result is statistically significant.
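The steps above can be sketched in a few dozen lines of Python. This is a minimal illustration under stated assumptions, not Mixpanel's implementation: the source does not say which model or how many groups the Impact Report uses, so the one-feature logistic regression, the 20 equal-size groups, the normal-approximation interval, and all helper names (`simulate_users`, `fit_propensity`, `stratified_impact`) are hypothetical. In the statistics literature this grouping approach is often called propensity score stratification.

```python
import math
import random
from statistics import mean, variance

def simulate_users(n=20000, true_effect=1.0, seed=0):
    """Synthetic (activity, adopted, metric) rows; active users adopt more often."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        activity = rng.gauss(0.0, 1.0)
        adopted = rng.random() < 1.0 / (1.0 + math.exp(-1.5 * activity))
        metric = 5.0 + 2.0 * activity + true_effect * adopted + rng.gauss(0.0, 1.0)
        rows.append((activity, adopted, metric))
    return rows

def fit_propensity(rows, steps=100, lr=1.0):
    """Fit P(adopted | activity) with logistic regression via gradient ascent."""
    w = b = 0.0
    n = len(rows)
    for _ in range(steps):
        grad_w = grad_b = 0.0
        for x, adopted, _ in rows:
            err = adopted - 1.0 / (1.0 + math.exp(-(w * x + b)))
            grad_w += err * x
            grad_b += err
        w += lr * grad_w / n
        b += lr * grad_b / n
    return lambda x: 1.0 / (1.0 + math.exp(-(w * x + b)))

def stratified_impact(rows, propensity, n_groups=20):
    """Size-weighted average of per-group deltas, plus a 95% confidence interval."""
    ranked = sorted(rows, key=lambda r: propensity(r[0]))
    size = len(ranked) // n_groups
    groups = []
    for g in range(n_groups):
        end = (g + 1) * size if g < n_groups - 1 else len(ranked)
        chunk = ranked[g * size:end]
        adopters = [m for _, a, m in chunk if a]
        non_adopters = [m for _, a, m in chunk if not a]
        if len(adopters) < 2 or len(non_adopters) < 2:
            continue  # no usable adopter vs non-adopter comparison in this group
        delta = mean(adopters) - mean(non_adopters)
        delta_var = (variance(adopters) / len(adopters)
                     + variance(non_adopters) / len(non_adopters))
        groups.append((len(chunk), delta, delta_var))
    total = sum(n for n, _, _ in groups)
    estimate = sum(n / total * d for n, d, _ in groups)
    std_err = math.sqrt(sum((n / total) ** 2 * v for n, _, v in groups))
    return estimate, (estimate - 1.96 * std_err, estimate + 1.96 * std_err)

rows = simulate_users()
estimate, (low, high) = stratified_impact(rows, fit_propensity(rows))
print(f"estimated impact: {estimate:.2f}, 95% CI: ({low:.2f}, {high:.2f})")
```

On this synthetic data the estimate should land close to the simulated true effect of 1.0, with an interval that excludes zero. Note that the sketch can only correct for the behavior the propensity model sees (here, the single activity score), and the interval treats the fitted propensities as fixed.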

In short, propensity matching enables you to estimate impact from the observational data you already gather, with no cumbersome testing required.

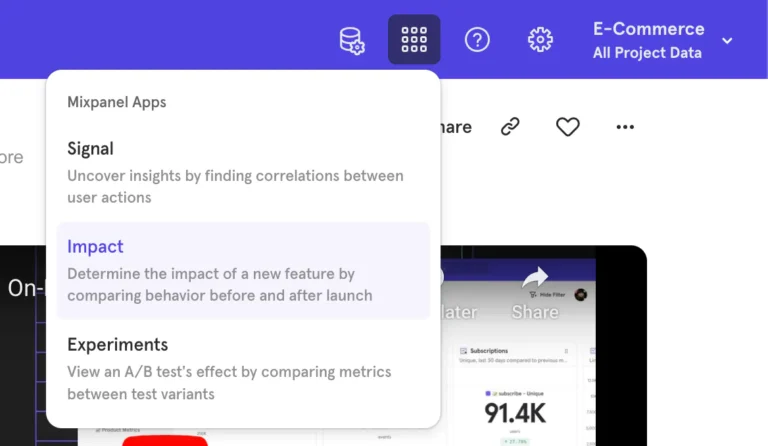

Measuring impact just got a whole lot easier

Now, if all that sounds a little complex, don’t worry. Mixpanel has you covered: we’ve built propensity matching into our brand-new Impact Report. This report is one of a kind in the product analytics industry, and it makes it a snap to assess the impact of both your new and past product launches. You get results you can trust, without slowing down development.

This powerful tool will help you quickly and accurately determine the impact of your product launches. We hope you’ll use the Impact Report to demonstrate your teams’ value, quickly decide where to invest next, and increase the rate at which you deliver value to your own customers.