Why AI analytics needs behavioral context

Context engineering is a relatively new discipline, but the baseline playbook is already forming: Invest in data quality, lock down governance, and define semantics. Yet, as enterprise teams race to build these context layers for AI, I keep seeing them stall on the same missing piece. They've given their agents all the business definitions in the world, but no signal about how the business actually behaves.

We've even seen it ourselves. As teams push to democratize insights through AI (talk to your data, natural language queries, AI analysts), the demo always looks great. Simple questions get clean answers. But it falls apart in the real world. Complex analyses like funnels and retention require context that the model doesn't have: what metrics mean in this part of the business, how segments are defined, what changed last quarter, which data to trust. We've hit this building our own copilot and agents at Mixpanel. The gap between a working demo and a reliable AI analyst is almost entirely a context problem.

The rising demand from our customers is to bridge this gap between context layers upstream and behavioral analytics downstream. For many, the context layer is already reasoning over the data and business context that matter: organizational patterns, decision history, metric definitions, how teams use data. But there's a specific source most implementations haven't wired in yet: behavioral context, meaning how end users and agents interact with what you've built. Getting it into the overall context layer is harder than teams expect.

The analysis that should happen automatically and doesn't

"Confident but wrong" doesn't look like a hallucinated metric. It looks like a disastrous product recommendation delivered with absolute certainty.

An enterprise AI agent analyzes an A/B test for a new onboarding flow. Variant B increased initial sign-ups by 5%. Without behavioral context, the AI confidently recommends rolling Variant B out to 100% of users.

What nobody measured: Users who go through the new flow skip a key configuration step that the old flow encouraged. They convert faster but adopt fewer features. Support tickets rise eight weeks later from users who never set up the integration that makes the product stick. The experiment succeeded on its own terms, but even well-designed guardrail metrics only cover the effects you anticipated. The unintended behavioral shift was invisible because it wasn't part of the success criteria.

Without a behavioral layer to map the full sequence of events, your enterprise AI isn't just blind; it's aggressively optimizing for the wrong outcomes. The behavioral consequences you didn't think to measure only surface when you explore the full sequence rather than checking predetermined metrics. That's what an AI analyst should do automatically: pull release context from the enterprise layer, then scan the full behavioral picture for effects nobody anticipated. It needs the behavioral layer to see what happened beyond the success metric.
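To make the idea of scanning beyond the success metric concrete, here is a minimal Python sketch. It assumes events arrive as `(user_id, variant, event_name)` tuples; the function names, the 10-point threshold, and the toy data are all hypothetical illustrations, not how any particular product implements this.

```python
from collections import defaultdict

def event_reach_by_variant(events):
    """Return {variant: {event_name: share of that variant's users who fired it}}."""
    users = defaultdict(set)                        # variant -> every user seen
    fired = defaultdict(lambda: defaultdict(set))   # variant -> event -> users
    for user_id, variant, event_name in events:
        users[variant].add(user_id)
        fired[variant][event_name].add(user_id)
    return {
        variant: {name: len(u) / len(users[variant]) for name, u in by_event.items()}
        for variant, by_event in fired.items()
    }

def unanticipated_shifts(events, control, treatment, success_metric, min_delta=0.10):
    """List events, other than the success metric, whose reach moved by >= min_delta."""
    reach = event_reach_by_variant(events)
    names = set(reach[control]) | set(reach[treatment])
    shifts = []
    for name in names - {success_metric}:
        delta = reach[treatment].get(name, 0.0) - reach[control].get(name, 0.0)
        if abs(delta) >= min_delta:
            shifts.append((name, round(delta, 2)))
    # Largest drops first; event name breaks ties so the order is stable.
    return sorted(shifts, key=lambda s: (s[1], s[0]))

# Invented toy data: four users per variant, every user sees the landing page.
toy_events = (
    [(u, "A", "landing_view") for u in ("u1", "u2", "u3", "u4")]
    + [(u, "A", "signup") for u in ("u1", "u2")]
    + [(u, "A", "configure_integration") for u in ("u1", "u2")]
    + [(u, "B", "landing_view") for u in ("u5", "u6", "u7", "u8")]
    + [(u, "B", "signup") for u in ("u5", "u6", "u7")]
    + [("u5", "B", "configure_integration")]
)

print(unanticipated_shifts(toy_events, "A", "B", success_metric="signup"))
# -> [('configure_integration', -0.25)]
```

In this toy data, sign-ups rise in Variant B, but the scan flags that far fewer Variant B users reach the configuration step. A real analysis would also need significance testing and a longer observation window; this only shows the shape of the check.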

Why this is harder to fix than teams expect

Most organizations have event data in their warehouse already. But raw events aren't behavioral context. Defining which sequences signal activation or disengagement, for which segments, with what recency, requires analytical infrastructure on top of the data.

This is where the two layers reinforce each other most directly. "Activation" means something different for your enterprise tier than for self-serve, and that definition evolves as your product does. When behavioral analytics pulls the current definition from the context layer rather than maintaining its own, the analysis stays accurate as definitions change. The context layer is the source of truth for what metrics mean, where they came from, whether they can be trusted, and what policies govern their use. Product analytics is the source of truth for what happened against those definitions. And that's before you factor in release calendars, campaign timelines, and competitive shifts – all context the enterprise layer holds that the AI analyst needs to interpret behavior correctly.
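One way to picture analytics pulling the definition rather than owning it is the sketch below. Everything in it is an assumption made for illustration: the `ActivationDefinition` shape, the in-memory `CONTEXT_LAYER` dictionary standing in for a real context-layer lookup, and the event names.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ActivationDefinition:
    segment: str
    required_events: tuple  # events that must occur in this order
    version: int            # bumped whenever the definition changes upstream

# Hypothetical stand-in for the context layer; a real system would query it.
CONTEXT_LAYER = {
    "enterprise": ActivationDefinition(
        "enterprise", ("signup", "configure_sso", "invite_teammate"), version=3
    ),
    "self_serve": ActivationDefinition(
        "self_serve", ("signup", "create_report"), version=1
    ),
}

def fetch_definition(segment):
    """Look up the current activation definition for a segment."""
    return CONTEXT_LAYER[segment]

def is_activated(user_events, definition):
    """True if the required events appear in order within the user's event stream."""
    remaining = iter(user_events)
    # `name in remaining` consumes the iterator, so order is enforced.
    return all(name in remaining for name in definition.required_events)

stream = ["signup", "create_report", "invite_teammate"]
print(is_activated(stream, fetch_definition("self_serve")))  # -> True
print(is_activated(stream, fetch_definition("enterprise")))  # -> False
```

The same event stream counts as activated for one tier and not the other, and the analysis code never hard-codes either definition. When the upstream definition changes, only the lookup result changes.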

There's also institutional knowledge that builds up over time. Experienced analysts learn which data sources are reliable for which questions, which segments are noisy, which metrics lead and which lag. Today that lives in people's heads. When the context layer captures how analysts use the system (which queries they run, which data they trust), every subsequent AI analysis benefits from that accumulated judgment. That’s the flywheel: Every interaction makes the context layer smarter.

The feedback loop that makes context compound

The relationship between these layers runs both directions. Most implementations haven't built the return path yet.

One of the largest online retailers we work with hit this exact wall. Their data governance team certifies metric definitions upstream in Atlan, but their product analysts were defining metrics locally in analytics tools. Neither side had visibility into the other. When they started building AI analysts, the first question was: Which definition wins? The answer required connecting the two layers, which in their words meant establishing the basic "spiderweb" of lineage and syncing primary metadata. That meant definitions flowing from governance into the analytics tool, and usage patterns flowing back so the governance team could see which metrics were actually being queried, which were stale, and where definitions had drifted.

Integration reference

The feedback loop: What flows in each direction

Context governs what AI can use. Behavioral signals tell you whether it worked.

| Signal type | → Atlan provides to Mixpanel | → Mixpanel feeds back to Atlan |
|---|---|---|
| Data trust | Which events and properties are certified and approved for analysis, reducing the risk of building on stale or uncertified tracking | Usage frequency by event: surfaces which certified assets are actually being queried and which have been abandoned in practice |
| Semantic meaning | Business glossary terms and lineage: enriches event context so AI can interpret behavioral signals against the right business definition | Behavioral patterns that don't match documented intent: flags events that may be mislabeled or misused in the tracking plan |
| Governance health | Ownership and stewardship metadata: identifies who is responsible for an event if its behavior looks unexpected | Events that haven't fired in 90+ days: helps Atlan deprecate stale assets rather than certifying data no one is using |
| AI output validation | Pre-filtered, governed data sets for AI recommendations: the context layer constrains what the model can suggest | Downstream behavioral change after AI-driven actions: confirms whether recommendations based on governed data produced the intended user outcome |
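As a rough illustration of the return path in the table, the sketch below derives two of the feedback signals from plain dictionaries: certified events that haven't fired in 90+ days, and certified events nobody queries. It does not call any Atlan or Mixpanel API; the function name, input shapes, and sample values are assumptions made for the example.

```python
from datetime import date, timedelta

def feedback_signals(certified_events, last_fired, query_counts, today, stale_after_days=90):
    """Derive governance feedback from usage data.

    certified_events: set of event names certified upstream
    last_fired:       {event_name: date the event last fired}
    query_counts:     {event_name: number of analyst/AI queries touching it}
    """
    cutoff = today - timedelta(days=stale_after_days)
    # An event that never fired, or last fired on or before the cutoff, is stale.
    stale = sorted(
        name for name in certified_events
        if name not in last_fired or last_fired[name] <= cutoff
    )
    unqueried = sorted(
        name for name in certified_events if query_counts.get(name, 0) == 0
    )
    return {"stale": stale, "certified_but_unqueried": unqueried}

# Invented sample inputs.
signals = feedback_signals(
    certified_events={"signup", "configure_integration", "legacy_export"},
    last_fired={
        "signup": date(2024, 12, 31),
        "configure_integration": date(2024, 12, 1),
        "legacy_export": date(2024, 9, 1),
    },
    query_counts={"signup": 120, "legacy_export": 0},
    today=date(2025, 1, 1),
)
print(signals["stale"])                    # -> ['legacy_export']
print(signals["certified_but_unqueried"])  # -> ['configure_integration', 'legacy_export']
```

A governance team could use output like this to decide which certified assets to review or deprecate, rather than continuing to certify data no one is using.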

Usage metadata is the obvious starting point. The less obvious direction: Analytical findings should flow back too. When an analyst discovers that an experiment improved conversion but degraded downstream feature adoption, that finding is context the enterprise layer should capture, so that it’s not just logged somewhere, but surfaced as institutional memory every future analysis can draw on. When an AI analyst scans post-release behavior and surfaces an unintended consequence, that conclusion should flow back too. Over time, these analytical patterns (what got queried most often, which segmentation revealed the signal) become saved context that makes every future analysis better.

Where this is heading

We're already seeing a growing share of product interactions come from AI agents rather than humans: calling APIs, running workflows, and triggering features on behalf of users. Most product and data teams don't have visibility into that behavior yet. But understanding how these agents behave becomes its own optimization surface. Which agents succeed? Which ones loop? Where do they get stuck? That's product analytics applied to a new kind of user, and the findings need to flow back into the context layer the same way human behavioral insights do.

Every user, whether human or agent, should have a machine-readable behavioral portrait that any system in the stack can consume. Think of a markdown file for each user containing what they did, what they tried, where they got stuck, what worked. Readable by any agent in the stack. When your support agent can read that portrait before responding, when your onboarding flow adapts based on it, when another company's agent interacting with your product via API can access it, behavioral context stops being an analytics feature and becomes infrastructure.
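A per-user markdown portrait could be produced with something as small as the sketch below. The section headings, the `(event_name, outcome)` input shape, and the `render_portrait` function are hypothetical; no standard format for such a file is implied.

```python
from collections import Counter

def render_portrait(user_id, actor_type, events):
    """Render a markdown portrait from (event_name, outcome) pairs.

    outcome is "ok" or "error"; actor_type is e.g. "human" or "agent".
    """
    attempts = Counter(name for name, _ in events)
    failures = Counter(name for name, outcome in events if outcome == "error")

    lines = [f"# Behavioral portrait: {user_id}", "", f"Actor type: {actor_type}", ""]
    lines.append("## What they did")
    lines += [f"- {name}: {n} attempt(s)" for name, n in sorted(attempts.items())]

    lines += ["", "## Where they got stuck"]
    stuck = [f"- {name}: {n} failed attempt(s)" for name, n in sorted(failures.items())]
    lines += stuck or ["- nothing recorded"]

    lines += ["", "## What worked"]
    worked = [f"- {name}" for name in sorted(attempts) if failures[name] == 0]
    lines += worked or ["- nothing recorded"]
    return "\n".join(lines)

# Invented sample activity for one agent.
portrait = render_portrait(
    "u42",
    "agent",
    [
        ("signup", "ok"),
        ("configure_integration", "error"),
        ("configure_integration", "error"),
        ("create_report", "ok"),
    ],
)
print(portrait)
```

Plain markdown keeps the portrait readable by both people and agents without a shared schema. The trade-off is that consumers have to parse text, so a production version might pair it with structured metadata.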

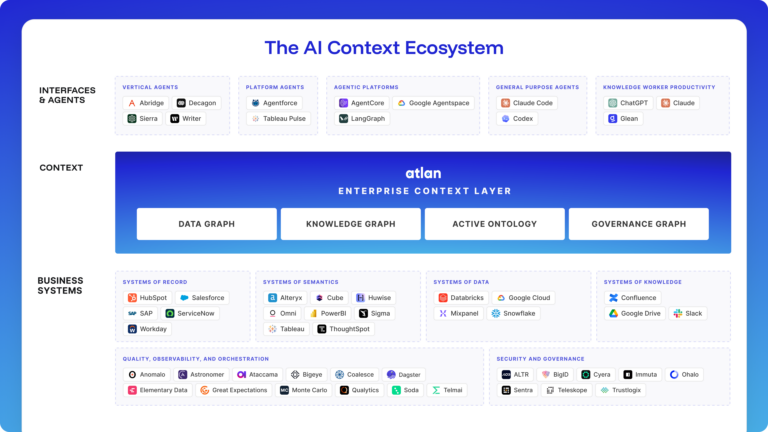

To truly fulfill the promise of AI, your enterprise context layer needs more behavioral context to reason about. That’s why we're excited to join Atlan as a Context Layer Partner, adding behavioral context to Atlan’s Enterprise Context Layer so AI agents can reason not just on what data means, but on how people and agents actually interact with it in production.

At Atlan Activate, we’ll be taking the next step to move the conversation forward. Save your spot here.